Benchmark CPU GPU neural network training

12/10/2023

Getting information about your GPU card

The function gpuDevice tells you about your GPU hardware. I asked Ben to walk me through the output of gpuDevice on my computer.

"You've got a pretty good GPU there - a lot better than the one I've got, at least for deep learning." (I'll explain this comment below.)

"An Index of 1 means that the NVIDIA driver thinks this GPU is the most powerful one installed on your computer. And ComputeCapability refers to the generation of computation capability supported by this card. The sixth generation is known as Pascal." As of the R2017b release, GPU computing with MATLAB and Parallel Computing Toolbox requires a ComputeCapability of at least 3.0.

The other information provided by gpuDevice is mostly useful to the developers writing low-level GPU computation routines, or for troubleshooting. There's one other number, though, that might be helpful to you when comparing GPUs. The MultiprocessorCount is effectively the number of chips on the GPU. "The difference between a high end card and a low end card within the same generation often comes down to the number of chips available."

GPUBench

The next thing Ben and I discussed was the output of GPUBench, a GPU performance measurement tool maintained by Ben's team. You can get it from the MATLAB Central File Exchange.

1 GFLOP is roughly 1 billion floating point operations per second. The report measures computational speed for both double-precision and single-precision floating point. Some cards excel at double precision, and some do better at single precision. The report shows the best double precision cards at the top because that is most important for general MATLAB computing.

The report includes three different computational benchmarks: MTimes (matrix multiplication), backslash (linear system solving), and FFT. The matrix multiplication benchmark is best at measuring pure computation speed, and so it has the highest GFLOP numbers. The FFT and backslash benchmarks, on the other hand, involve more of a mixture of computation and I/O, so the reported GFLOP rates are lower.
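To make the GFLOP figure concrete, here is a minimal Python sketch of how a rate like the one reported for a matrix multiplication benchmark can be derived: count the floating point operations, time the work, and divide. This is not GPUBench's code. The function names, the pure-Python multiply, and the conventional operation count of n*n*(2n - 1) are all assumptions chosen for illustration, and the rate it prints will be far below anything a GPU reports.

```python
import time


def matmul_flop_count(n):
    """Conventional operation count for an n-by-n times n-by-n product:
    each of the n*n outputs takes n multiplies and n - 1 additions."""
    return n * n * (2 * n - 1)


def gflops(flop_count, seconds):
    """Convert an operation count and elapsed time to GFLOP per second."""
    return flop_count / seconds / 1e9


def naive_matmul(a, b):
    """Plain-Python matrix product of two square lists of lists."""
    cols_b = list(zip(*b))  # columns of b, so each output is a dot product
    return [[sum(x * y for x, y in zip(row, col)) for col in cols_b]
            for row in a]


def time_matmul(n, repeats=3):
    """Return the best wall-clock time over a few repeats for size n."""
    a = [[float(i + j) for j in range(n)] for i in range(n)]
    b = [[float(i - j) for j in range(n)] for i in range(n)]
    best = float("inf")
    for _ in range(repeats):
        start = time.perf_counter()
        naive_matmul(a, b)
        best = min(best, time.perf_counter() - start)
    return best


if __name__ == "__main__":
    n = 64  # hypothetical problem size, kept small for pure Python
    seconds = time_matmul(n)
    rate = gflops(matmul_flop_count(n), seconds)
    print(f"{n}x{n} multiply: {rate:.4f} GFLOP per second")
```

Taking the best of several repeats reduces the influence of one-off delays. A benchmark that also moves data around, as the text notes for FFT and backslash, would spend part of the measured time outside the counted operations and so report a lower rate.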

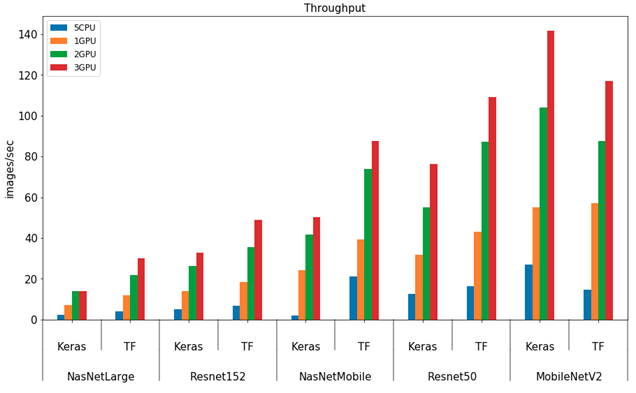
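The ComputeCapability requirement quoted in the post (at least 3.0 as of R2017b) is a version comparison, which can be sketched in Python as follows. The function names and the sample values are hypothetical. The only figure taken from the text is the 3.0 minimum. The version is compared as a (major, minor) pair rather than as a decimal number, so that a value such as 10.0 is not mistaken for something smaller than 3.0 by string comparison.

```python
# Minimum taken from the text: ComputeCapability of at least 3.0 (R2017b).
MIN_COMPUTE_CAPABILITY = (3, 0)


def parse_capability(text):
    """Split a 'major.minor' string such as '6.1' into a pair of ints."""
    major, minor = text.strip().split(".")
    return int(major), int(minor)


def meets_requirement(text, minimum=MIN_COMPUTE_CAPABILITY):
    """True if the reported capability is at least the minimum."""
    return parse_capability(text) >= minimum


if __name__ == "__main__":
    # Hypothetical sample values, not read from real hardware.
    for reported in ["2.1", "3.0", "6.1"]:
        print(reported, meets_requirement(reported))
```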

AI Benchmark Alpha is an open source Python library for evaluating the AI performance of various hardware platforms, including CPUs, GPUs and TPUs. The benchmark relies on the TensorFlow machine learning library and provides a lightweight and accurate solution for assessing inference and training speed for key deep learning models. In total, AI Benchmark consists of 42 tests and 19 sections. For more information and results, please visit the project website.

The benchmark requires the TensorFlow machine learning library to be present on your system. On systems that do not have Nvidia GPUs, install TensorFlow with `pip install tensorflow` before installing AI Benchmark. If you want to check the performance of Nvidia graphics cards, use `pip install tensorflow-gpu` instead.

Note 1: If TensorFlow is already installed on your system, you can skip the TensorFlow install command.

Note 2: For running the benchmark on Nvidia GPUs, the NVIDIA CUDA and cuDNN libraries should be installed first.

To run AI Benchmark, use the following code: `from ai_benchmark import AIBenchmark`, then `benchmark = AIBenchmark()`, then `results = benchmark.run()`. Alternatively, on Linux systems you can type `ai-benchmark` in the command line to start the tests. To run inference or training only, use `benchmark.run_inference()` or `benchmark.run_training()`.

The constructor is `AIBenchmark(use_CPU=None, verbose_level=1)`. If high precision is selected for a run, the benchmark will execute 10 times more runs for each test.

A GPU with at least 2GB of RAM is required for running inference tests, and 4GB of RAM for training tests. The benchmark is compatible with both TensorFlow 1.x and 2.x versions.
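The run options described above can be gathered into one small wrapper. The following is a sketch, not part of AI Benchmark: the helper names `pick_method`, `min_gpu_ram_gb` and `run_benchmark` are hypothetical, and treating a full run as needing the larger 4GB figure is an assumption. The library is imported lazily inside `run_benchmark`, so the helpers can be used on a machine where `ai_benchmark` and TensorFlow are not installed.

```python
# Maps a run mode to the AIBenchmark method named in the text.
METHODS = {
    "all": "run",
    "inference": "run_inference",
    "training": "run_training",
}

# GPU RAM figures from the text: 2GB for inference, 4GB for training.
# Using 4GB for a full run is an assumption (it includes training tests).
MIN_GPU_RAM_GB = {"inference": 2, "training": 4, "all": 4}


def pick_method(mode):
    """Return the method name for a mode, or raise on an unknown mode."""
    if mode not in METHODS:
        raise ValueError(f"unknown mode: {mode!r}")
    return METHODS[mode]


def min_gpu_ram_gb(mode):
    """Return the minimum GPU RAM in GB stated for the given mode."""
    pick_method(mode)  # reuse the validation above
    return MIN_GPU_RAM_GB[mode]


def run_benchmark(mode="all", use_cpu=None, verbose_level=1):
    """Run the selected tests; requires ai_benchmark and TensorFlow."""
    from ai_benchmark import AIBenchmark  # lazy import, see lead-in

    benchmark = AIBenchmark(use_CPU=use_cpu, verbose_level=verbose_level)
    return getattr(benchmark, pick_method(mode))()


if __name__ == "__main__":
    for mode in METHODS:
        print(mode, pick_method(mode), min_gpu_ram_gb(mode), "GB")
```

Calling `run_benchmark("inference")` on a machine with the library installed would start only the inference tests. The script itself only prints the mode table, so it does not trigger a long benchmark run by accident.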
